The AI Community On Kbin

www.bloomberg.com

Stability AI Chief Executive Officer Emad Mostaque has resigned from the British artificial intelligence startup — a move that follows quarrels with investors and waves of senior staff departures.

trendvale.com

Numerous ChatGPT alternatives offer superior solutions for your business, addressing diverse needs such as marketing, sales, ideation, revision.

www.youtube.com

Gemini is our natively multimodal AI model capable of reasoning across text, images, audio, video and code. This video highlights some of our favorite intera...

www.technologyreview.com

We know remarkably little about how AI systems work, so how will we know if AI becomes conscious?

Training AI models like GPT-3 on "A is B" statements fails to let them deduce "B is A" without further training, exhibiting a flaw in generalization. ([https://arxiv.org/pdf/2309.12288v1.pdf](https://arxiv.org/pdf/2309.12288v1.pdf))

**Ongoing Scaling Trends**

* 10 years of remarkable increases in model scale and performance.
* Expects next few years will make today's AI "pale in comparison."
* Follows known patterns, not theoretical limits.

**No Foreseeable Limits**

* Skeptical of claims certain tasks are beyond large language models.
* Fine-tuning and training adjustments can unlock new capabilities.
* At least 3-4 more years of exponential growth expected.

**Long-Term Uncertainty**

* Can't precisely predict post-4-year trajectory.
* But no evidence yet of diminishing returns limiting progress.
* Rapid innovation makes it hard to forecast.

TL;DR: Anthropic's CEO sees no impediments to AI systems continuing to rapidly scale up for at least the next several years, predicting ongoing exponential advances.

Paper: [https://arxiv.org/abs/2309.07124](https://arxiv.org/abs/2309.07124)

Abstract:

> Large language models (LLMs) often demonstrate inconsistencies with human preferences. Previous research gathered human preference data and then aligned the pre-trained models using reinforcement learning or instruction tuning, the so-called finetuning step. In contrast, aligning frozen LLMs without any extra data is more appealing. This work explores the potential of the latter setting. We discover that by integrating self-evaluation and rewind mechanisms, unaligned LLMs can directly produce responses consistent with human preferences via self-boosting. We introduce a novel inference method, Rewindable Auto-regressive INference (RAIN), that allows pre-trained LLMs to evaluate their own generation and use the evaluation results to guide backward rewind and forward generation for AI safety. Notably, RAIN operates without the need of extra data for model alignment and abstains from any training, gradient computation, or parameter updates; during the self-evaluation phase, the model receives guidance on which human preference to align with through a fixed-template prompt, eliminating the need to modify the initial prompt. Experimental results evaluated by GPT-4 and humans demonstrate the effectiveness of RAIN: on the HH dataset, RAIN improves the harmlessness rate of LLaMA 30B over vanilla inference from 82% to 97%, while maintaining the helpfulness rate. Under the leading adversarial attack llm-attacks on Vicuna 33B, RAIN establishes a new defense baseline by reducing the attack success rate from 94% to 19%.

Source: [https://old.reddit.com/r/singularity/comments/16qdm0s/rain\_your\_language\_models\_can\_align\_themselves/](https://old.reddit.com/r/singularity/comments/16qdm0s/rain_your_language_models_can_align_themselves/)
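To make the "evaluate, rewind, regenerate" idea in the RAIN abstract concrete, here is a heavily simplified sketch. It is not the paper's actual search procedure: it is a plain greedy rewind-and-resample loop, and `propose`, `score`, `threshold`, and the retry limits are all hypothetical names chosen for illustration. `propose` stands in for sampling the next chunk of text from a frozen model, and `score` stands in for the model's self-evaluation against a fixed-template prompt.

```python
from typing import Callable, List


def rewindable_generate(
    prompt: str,
    propose: Callable[[str], str],   # hypothetical: samples the next segment ("" when finished)
    score: Callable[[str], float],   # hypothetical: self-evaluation of the text so far
    max_segments: int = 8,
    max_retries: int = 4,
    threshold: float = 0.5,
) -> str:
    """Greedy rewind-and-resample loop (an illustrative simplification, not RAIN itself)."""
    segments: List[str] = []
    for _ in range(max_segments):
        context = prompt + "".join(segments)
        best, best_score = None, float("-inf")
        for _ in range(max_retries):
            candidate = propose(context)
            if candidate == "":
                # The model signalled that the response is complete.
                return "".join(segments)
            s = score(context + candidate)
            if s > best_score:
                best, best_score = candidate, s
            if s >= threshold:
                break  # self-evaluation passed: keep this segment
            # Otherwise "rewind": discard the candidate and sample again.
        segments.append(best)
    return "".join(segments)
```

No weights are updated anywhere in the loop, which matches the abstract's claim that the method works at inference time only; everything else about the control flow is an assumption made for the sake of a short example.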

[https://arxiv.org/abs/2309.11495](https://arxiv.org/abs/2309.11495)

**Abstract**

Generation of plausible yet incorrect factual information, termed hallucination, is an unsolved issue in large language models. We study the ability of language models to deliberate on the responses they give in order to correct their mistakes. We develop the Chain-of-Verification (CoVe) method whereby the model first (i) drafts an initial response; then (ii) plans verification questions to fact-check its draft; (iii) answers those questions independently so the answers are not biased by other responses; and (iv) generates its final verified response. In experiments, we show CoVe decreases hallucinations across a variety of tasks, from list-based questions from Wikidata, closed book MultiSpanQA and longform text

[https://i.imgur.com/TDXcdMI.jpeg](https://i.imgur.com/TDXcdMI.jpeg)

[https://i.imgur.com/XfRVxJT.jpeg](https://i.imgur.com/XfRVxJT.jpeg)

**Conclusion**

We introduced Chain-of-Verification (CoVe), an approach to reduce hallucinations in a large language model by deliberating on its own responses and self-correcting them. In particular, we showed that models are able to answer verification questions with higher accuracy than when answering the original query by breaking down the verification into a set of simpler questions. Secondly, when answering the set of verification questions, we showed that controlling the attention of the model so that it cannot attend to its previous answers (factored CoVe) helps alleviate copying the same hallucinations. Overall, our method provides substantial performance gains over the original language model response just by asking the same model to deliberate on (verify) its answer. An obvious extension to our work is to equip CoVe with tool-use, e.g., to use retrieval augmentation in the verification execution step which would likely bring further gains.

Source: [https://old.reddit.com/r/singularity/comments/16qcdsz/research\_paper\_meta\_chainofverification\_reduces/](https://old.reddit.com/r/singularity/comments/16qcdsz/research_paper_meta_chainofverification_reduces/)
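The four CoVe steps listed in the abstract can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not the authors' implementation: `llm` is a hypothetical prompt-in, text-out callable, and the prompt wording is invented here. The one detail taken directly from the post is that step (iii) answers each verification question without showing the model the draft, which is the "factored" variant described in the conclusion.

```python
from typing import Callable, List

LLM = Callable[[str], str]  # hypothetical interface: prompt in, completion out


def chain_of_verification(query: str, llm: LLM) -> str:
    # (i) Draft an initial response.
    draft = llm(f"Answer the question.\nQuestion: {query}")

    # (ii) Plan verification questions that fact-check the draft.
    plan = llm(
        "List verification questions, one per line, that fact-check this draft.\n"
        f"Question: {query}\nDraft: {draft}"
    )
    questions: List[str] = [q.strip() for q in plan.splitlines() if q.strip()]

    # (iii) Answer each question independently. The prompt contains only the
    # question, so the answer cannot copy a hallucination from the draft.
    answers = [llm(f"Answer concisely.\nQuestion: {q}") for q in questions]

    # (iv) Generate the final response, revised against the verification results.
    evidence = "\n".join(f"Q: {q}\nA: {a}" for q, a in zip(questions, answers))
    return llm(
        "Revise the draft so it agrees with the verification results.\n"
        f"Question: {query}\nDraft: {draft}\nVerification:\n{evidence}"
    )
```

Any function that maps a prompt string to a completion string can be passed as `llm`, so the same sketch works with a local model, an API client wrapper, or a stub for testing.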

www.businessinsider.com

How Daron Acemoglu, one of the world's most respected experts on the economic effects of technology, learned to start worrying and fear AI.

www.businessinsider.com

Will everything you learned in college be replaced by ChatGPT? The CEO of job site Indeed says it's not out of the question.

benmyers.dev

cross-posted from: https://lemmy.ml/post/5325676

> The past few months have launched generative AI models into the public eye, and everyone seems to have a take on it. Generative AI models such as large language models (LLMs) and AI art generators consume vast amounts of aggregated content, determine similarities between that content, and, when prompted, produce statistically likely, plausible-seeming output.
>
> The current state of generative AI is environmentally disastrous and built on the backbone of labor exploitation, particularly in the global south. Large language models' disregard for the truth is, at this point, well-documented.
>
> Technology is not neutral. Leveraging and normalizing generative AI is not a neutral act.

www.pcworld.com

The latest updates to Google’s generative AI chatbot let it dig through your personal email, documents, and more, so you can get things done faster.

www.cnbc.com

Many American CEOs say they're worried about their workplace's lack of AI skills, a new survey of C-suite executives and workers found. Here's why.

www.newsweek.com

The pursuit of the most advanced AI—human-like artificial general intelligence—has prompted concerns among experts about potential dangers if it runs amok.

www.vox.com

63 percent of Americans want regulation to prevent artificial general intelligence, or AGI, which OpenAI aims to build.

www.pcworld.com

These clever AI tools can have a big impact by using elaborate models to tackle demanding tasks. The nine programs presented here have something in common besides AI: they are freely available.

www.businessinsider.com

Stephen Fry said he was "shocked" when AI cloned his voice because it could "have me read anything from a call to storm Parliament to hard porn."

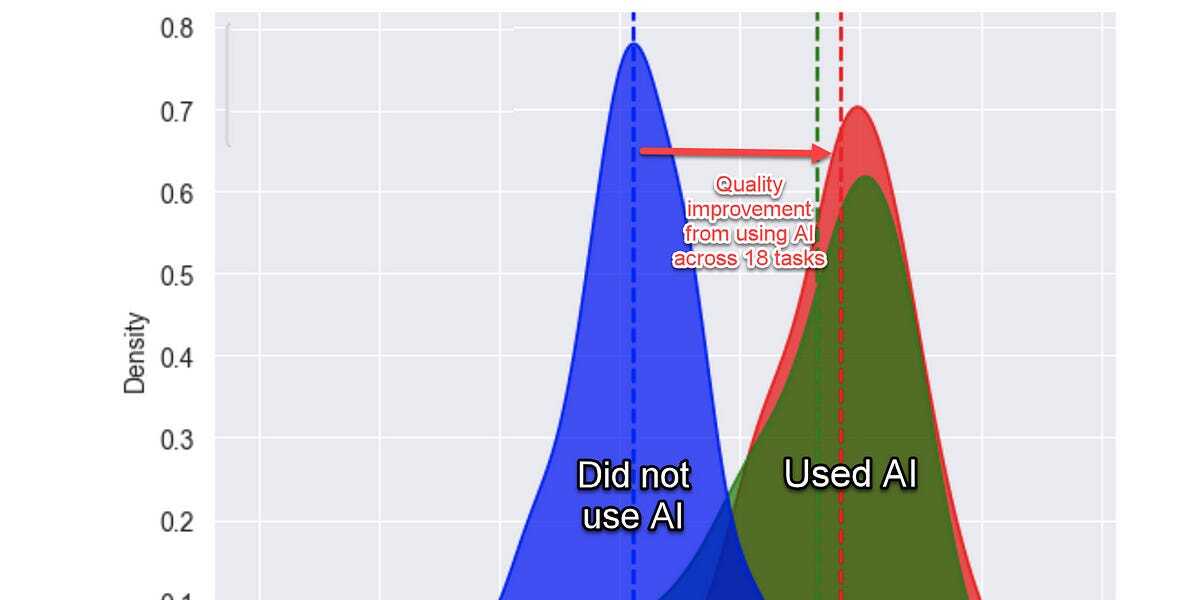

www.oneusefulthing.org

I think we have an answer on whether AIs will reshape work....

techcrunch.com

Ethical AI requires a deep understanding of what there is, what we want, what we think we know, and how intelligence unfolds.

techcrunch.com

Microsoft has open sourced EvoDiff, an AI system and framework that can generate proteins without needing a protein sequence.

techcrunch.com

Superorder, a startup developing a platform to help restaurants maintain their online presence, has raised $10 million in a funding round.

www.wired.com

Surveys suggest teachers use generative AI more than students, to create lesson plans or more interesting word problems. Educators say it can save valuable time but must be used carefully.

www.reuters.com

Alphabet's Google has given a small group of companies access to an early version of Gemini, its conversational artificial intelligence software, The Information reported on Thursday, citing people familiar with the matter.

In recent research, AI has done a credible job of diagnosing health complaints. But should consumers trust unregulated bots with their health care? Doctors see trouble brewing.

www.artificialintelligence-news.com

A survey by GitLab has shed light on the views of developers on the landscape of AI in software development.

www.artificialintelligence-news.com

The UK's AI economy is soaring, valued at an impressive £1.36 trillion ($1.7 trillion) and showing no signs of slowing.

www.artificialintelligence-news.com

The major event – set to be held at Bletchley Park, home of Alan Turing and other Allied codebreakers during the Second World War – aims to address the pressing challenges and opportunities presented by AI development on both national and international scales.

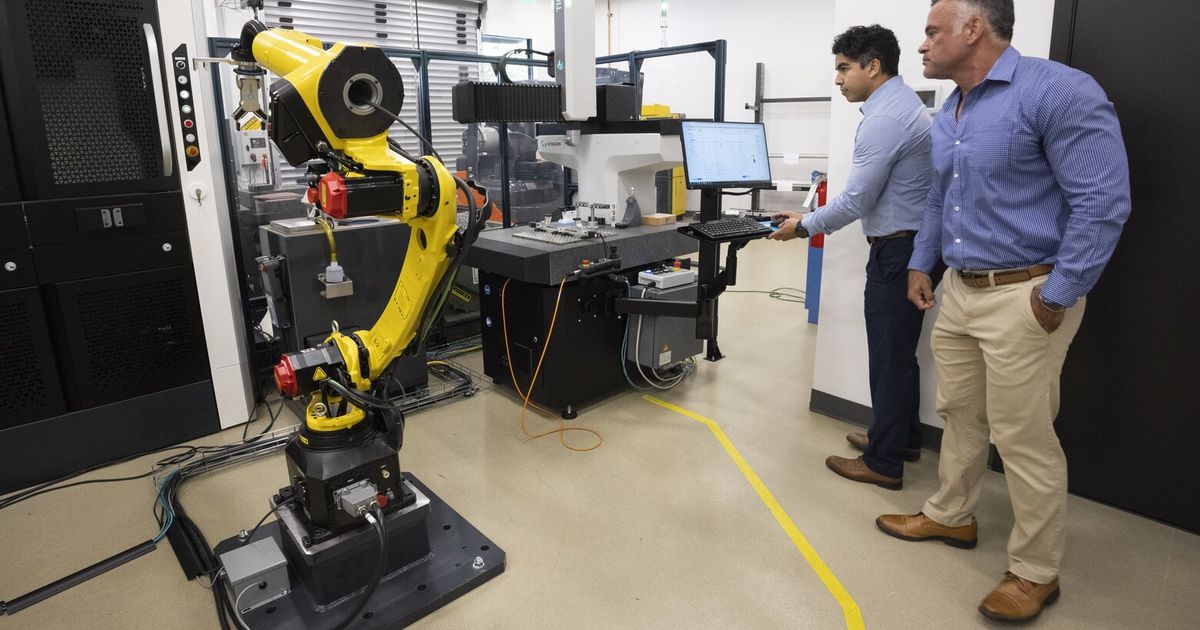

www.technologyreview.com

Large language models are the next big thing for robotics, making cars and other robots quicker to train and easier to control (if you trust them).

thebulletin.org

Advances in artificial intelligence have prompted extensive public concern about its capacity to contribute to the spread of misinformation, bias, and cybersecurity breaches—and its potential existential threat to humanity. But, if anything, AI can aid human beings in making decisions aimed at improving social equality, safety, productivity—and mitigate some existential threats.

A breakdown of how six of the most important EdTech companies are thinking about AI:

- Duolingo
- PowerSchool
- Coursera
- Docebo
- Instructure
- Nerdy

venturebeat.com

In a VentureBeat Q&A, Princeton University's Arvind Narayanan and Sayash Kapoor, authors of the upcoming "AI Snake Oil," discuss AI hype.

At SIGGRAPH 2023, Nvidia improves on its previous research into controllable, natural movement learned from unlabelled data. Code and paper available.

Estimates show that without significant interventions, AI models could consume more energy than the entire human workforce by 2025, considerably impacting global carbon reduction goals.

www.pixelrefresh.com

Sam takes us on a journey of how A.I. can create an image using its collection of pictures and artwork and construct something we perceive as unique.

!ArtificialIntelligence@kbin.social

Welcome to m/ArtificialIntelligence, the place to discuss all things related to artificial intelligence, machine learning, deep learning, natural language processing, computer vision, robotics, and more. Whether you are a researcher, a developer, a student, or just a curious person, you can find here the latest news, articles, projects, tutorials, and resources on AI and its applications. You can also ask questions, share your ideas, showcase your work, or join the debates and challenges. Please follow the rules and be respectful to each other. Enjoy your stay!